First, what is Tor?

Tor, short for The Onion Router, is free and open-source software for enabling anonymous communication. It directs Internet traffic through a free, worldwide, volunteer overlay network, consisting of more than six thousand relays, for concealing a user’s location and usage from anyone conducting network surveillance or traffic analysis. Using Tor makes it more difficult to trace the Internet activity to the user. Tor’s intended use is to protect the personal privacy of its users, as well as their freedom and ability to conduct confidential communication by keeping their Internet activities unmonitored.

Source: Wikipedia

Questions you should clarify before configuring a Tor service

- Do you want to run a Tor exit or non-exit (bridge/guard/middle) relay?

- If you want to run an exit relay: Which ports do you want to allow in your exit policy? (More ports usually means potentially more abuse complaints.)

- What external TCP port do you want to use for incoming Tor connections? (“ORPort” configuration: We recommend port 443 if that is not used by another daemon on your server already. ORPort 443 is recommended because it is often one of the few open ports on public WIFI networks. Port 9001 is another commonly used ORPort.)

- What email address will you use in the ContactInfo field of your relay(s)? This information will be made public.

- How much bandwidth/monthly traffic do you want to allow for Tor traffic?

- Does the server have an IPv6 address?
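As a rough illustration of where these answers end up, the sketch below prints a torrc fragment for a non-exit relay on a plain (non-Docker) install. The function name `render_torrc` and its argument order are made up for this example; the torrc option names are standard Tor options. The Docker image used later in this post takes its settings from environment variables instead, so treat this only as a way to see how the checklist maps to configuration.

```shell
#!/bin/sh
# Hypothetical helper: print a torrc fragment from the checklist answers.
# $1 = ORPort, $2 = contact email, $3 = traffic cap per accounting period
render_torrc() {
    cat <<EOF
ORPort $1
ContactInfo $2
ExitRelay 0
AccountingStart month 1 00:00
AccountingMax $3
EOF
}
```

For example, `render_torrc 443 you@example.com "500 GBytes"` prints a fragment that listens on port 443, publishes the given contact address, refuses exit traffic, and limits traffic per monthly accounting period.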

What is a bridge?

Bridge relays are Tor relays that are not listed in the public Tor directory.

That means that ISPs or governments trying to block access to the Tor network can’t simply block all bridges. Bridges are useful for Tor users under oppressive regimes, and for people who want an extra layer of security because they’re worried somebody will recognize that they are contacting a public Tor relay IP address.

A bridge is just a normal relay with a slightly different configuration. See How do I run a bridge for instructions.

Several countries, including China and Iran, have found ways to detect and block connections to Tor bridges. Obfsproxy bridges address this by adding another layer of obfuscation. Setting up an obfsproxy bridge requires an additional software package and additional configurations. See our page on pluggable transports for more info.

Source: https://support.torproject.org/censorship/censorship-7/

Why use Docker?

Docker uses OS-level virtualization to deliver software in packages called containers. Running the bridge in a container keeps it isolated from the rest of the system, which is safer if you plan to host other services on the same machine.

In this case we use “docker-compose” for convenience: with a single file we can deploy the full Tor bridge with minimal effort and the same efficiency as plain Docker.

How to host a bridge using docker-compose

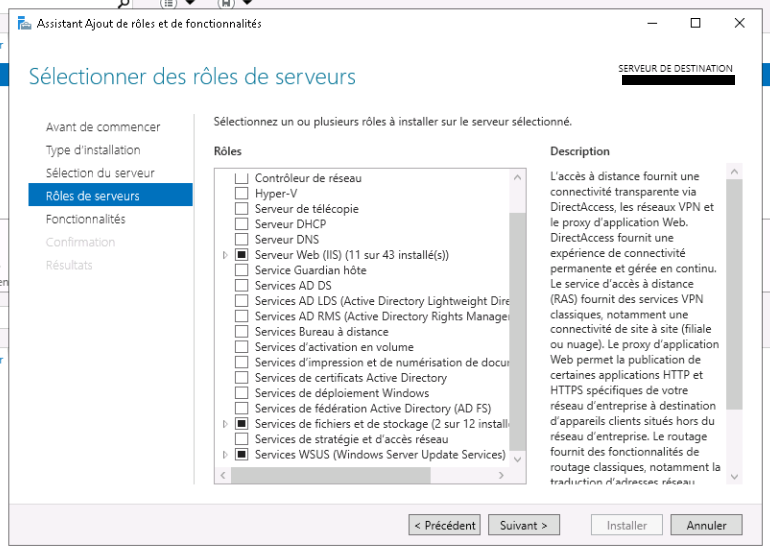

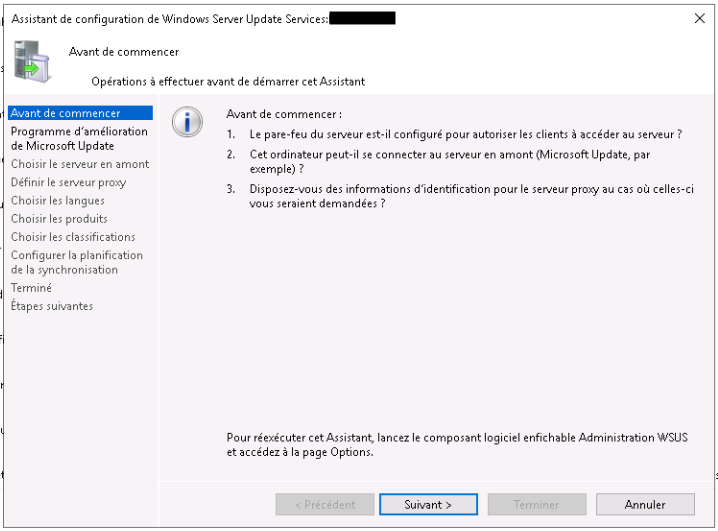

To host a Tor bridge container, you first need to have Docker and docker-compose installed. Then choose two public ports and, if your server sits behind NAT, forward them to your Tor bridge server.
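A quick way to confirm the prerequisites is to check that both commands are on your PATH. The helper below is a minimal sketch; the name `missing_commands` is invented for this example and is not part of Docker.

```shell
#!/bin/sh
# Hypothetical helper: print one line per command that is not on PATH.
# Returns non-zero if at least one command is missing.
missing_commands() {
    status=0
    for cmd in "$@"; do
        if ! command -v "$cmd" >/dev/null 2>&1; then
            echo "missing: $cmd"
            status=1
        fi
    done
    return "$status"
}
```

Running `missing_commands docker docker-compose` should print nothing and succeed on a host that is ready for the rest of this guide.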

Create the following two files in the same directory. Make sure to change the environment variables in the .env file to match your setup.

Uncomment any optional variables you want to use. Do not edit the volumes structure in docker-compose.yml; if you do need to change it, check the link below first.
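Because the .env file ships with placeholder values, a small sanity check before deploying can save a failed start. The sketch below assumes the two ports must be whole numbers between 1 and 65535 and must differ from each other; the function names are made up for this example.

```shell
#!/bin/sh
# Hypothetical helper: succeed if $1 is a whole number between 1 and 65535.
is_valid_port() {
    case "$1" in
        ''|*[!0-9]*) return 1 ;;   # empty or contains a non-digit
    esac
    [ "$1" -ge 1 ] && [ "$1" -le 65535 ]
}

# Hypothetical helper: succeed if both ports are valid and not identical.
check_bridge_ports() {
    is_valid_port "$1" && is_valid_port "$2" && [ "$1" -ne "$2" ]
}
```

One possible use, assuming your .env contains only simple `KEY=value` lines, is `. ./.env && check_bridge_ports "$OR_PORT" "$PT_PORT"`. The check fails while the ports are still set to the `XXX` placeholders.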

docker-compose.yml

    version: "3.4"
    services:
      obfs4-bridge:
        container_name: obfs4-bridge
        image: thetorproject/obfs4-bridge:latest
        environment:
          # Exit with an error message if OR_PORT is unset or empty.
          - OR_PORT=${OR_PORT:?Env var OR_PORT is not set.}
          # Exit with an error message if PT_PORT is unset or empty.
          - PT_PORT=${PT_PORT:?Env var PT_PORT is not set.}
          # Exit with an error message if EMAIL is unset or empty.
          - EMAIL=${EMAIL:?Env var EMAIL is not set.}
        env_file:
          - .env
        volumes:
          - data:/var/lib/tor
        ports:
          - ${OR_PORT}:${OR_PORT}
          - ${PT_PORT}:${PT_PORT}
        restart: unless-stopped

    volumes:
      data:
        name: tor-datadir-${OR_PORT}-${PT_PORT}

.env

    # This file configures the obfs4 bridge container deployed by
    # docker-compose.yml. Keep it in the same directory as that file.
    # You need the tool 'docker-compose' to deploy the bridge. You can
    # find it in the Debian package 'docker-compose'.
    #
    # EMAIL is your email address, OR_PORT is your onion routing port,
    # and PT_PORT is your obfs4 port.
    EMAIL=you@example.com
    OR_PORT=XXX
    PT_PORT=XXX

    # If needed, you can also enable processing of additional variables with:
    # OBFS4_ENABLE_ADDITIONAL_VARIABLES=1
    # followed by the desired torrc entries, each prefixed with OBFS4V_.
    # For example:
    # OBFS4V_AddressDisableIPv6=1

Next, pull the Docker image by running:

    docker-compose pull obfs4-bridge

And finally, to (re-)deploy the container, run:

    docker-compose up -d obfs4-bridge

How to check if your relay is active?

The first thing you can do is check your logs for errors. If you see none and the bandwidth speed test has completed, you can look up your relay on the Tor Metrics website.

    docker logs obfs4-bridge

To identify your relay you need your Tor bridge's hashed identity key fingerprint. You can find it in the logs; it should look like this:

Your Tor bridge's hashed identity key fingerprint is 'DockerObfs4Bridge AAAABBBBCCCCDDDDEEEE'
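If the log is long, the fingerprint can be pulled out with a short filter. This is a minimal sketch that assumes the log line has exactly the shape shown above (a nickname, a space, then the hexadecimal fingerprint, all inside single quotes); `extract_fingerprint` is a name invented for this example.

```shell
#!/bin/sh
# Hypothetical helper: read log text on stdin and print the most recent
# hashed identity key fingerprint, without the nickname or the quotes.
extract_fingerprint() {
    sed -n "s/.*hashed identity key fingerprint is '[^ ]* \([0-9A-Fa-f]*\)'.*/\1/p" | tail -n 1
}
```

With the container name used in this post, `docker logs obfs4-bridge 2>&1 | extract_fingerprint` should print only the fingerprint, which you can then paste into the search box on the Tor Metrics website.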